This piece ran in newspapers in early July, 2022.

****************

I regularly ask Siri (on my iPhone) to answer questions for me. “What’s the current barometric pressure?” “How tall was John Wayne?” “How long does it take to drive from here to Blackwater Falls State Park (in WV)?”

She’s often quite helpful.

Sometimes, after she helps, I say “Thank you.”

I’m always aware that there’s something ridiculous about my thanking a piece of programmed software, about my responding to Siri as if she were what she sounds like — a woman with a nice voice — despite my knowing that Siri is really only an extraordinarily clever “machine” developed by a long line of intelligent people.

My impulse to thank Siri reminds me that there has long been something in me that occasionally relates to something in the inanimate world as if it had feelings of which one should be considerate.

When I was six, I recall, my parents were talking about our couch, about how unappealingly slovenly it had become, ripe to be junked and replaced.

I had feelings for this couch because it was on that couch that we as a family would have our coziest moments.

So when I heard my parents talking that way, I called out to them not to have that kind of conversation right there in front of the couch. They would hurt its feelings.

From my study of anthropology, I believe that this way of relating to the inanimate, as if it were animate, is etched into human nature. In cultures all over the world, people have related to rocks or mountains or rivers or the sun as if they had the attributes of a sentient being, i.e. something that experiences things and can have a relationship of some sort with human beings.

Although I know that Siri is just “a thing,” I will continue to thank her because it feels right: I don’t want to get into the habit of talking to something that, like Siri, comes across as such a being as if it were merely a means to meeting my needs.

Mere means to an end: that’s an ugly thing seen in how some people have treated their servants and their slaves. If I had a servant, I would want the relationship to include more basic human respect and caring than that.

But Siri brings up another big part of this issue. Many people in the Artificial Intelligence (A.I.) field are raising the question: will there eventually be, in the human future, human-created machines that are beings that experience their existence, with some experiences feeling better to them and some worse? Beings, that is, about whose feelings one should care.

That couch may not have had feelings to hurt, but various movies these days (like I, Robot, Blade Runner, Her, and A.I.) depict machines that are not “just machines” but have an internal experience which makes it matter how they are treated.

It all raises a question about whether it is possible for “inanimate” objects, composed entirely of the material stuff of the world, to achieve all the crucial things we achieve as living animals and sentient beings.

Some people believe that there’s an unbridgeable divide here, between the material and the experiential. They do not believe that human consciousness can be a function simply of the material stuff of which we are made.

- Many religious people point to the soul, regarded as from a different realm, existing apart from the body.

- Many philosophers ponder what they call “the hard problem of consciousness,” according to which it is a great mystery how a living thing, like a human being, can have a body that provides the capacity for “subjective experience.”

Other thinkers, meanwhile, believe it’s no problem, no unbridgeable gap. In their view, there’s no reason why – among all the other valuable functions living organisms have evolved to perform – the capacity for experience wouldn’t also have arisen through the evolutionary process.

An implication of that view is that there would be nothing inherently impossible about humans creating machines – out of inanimate material — that are also our fellow-beings. For indeed – according to the scientific understanding of the development of life on earth – evolution does seem to have built consciousness up from the inanimate.

Wherever one wants to start, from the beginnings with non-biologically formed organic molecules, up through the first self-replicating life-forms, and onward to multi-celled organisms, it is clear that – at some point – life developed creatures that are conscious, that have experience, that have feelings. Like us.

And it’s clearly not only humans, either, who possess “consciousness” in that fundamental sense of “having experience” whose quality matters to them. Any owner of a pet cat or dog knows they have such “consciousness.” (And who knows how many other species of life have experience that matters to themselves?)

So it seems plausible that someday there will be things humans have made from “inanimate” material who are beings whose feelings we are morally obligated to take into account.

I hope by then the human world will have become better at “Do unto others as you would have them do unto you,” i.e. at treating well other human beings who we know are beings like ourselves.

******************

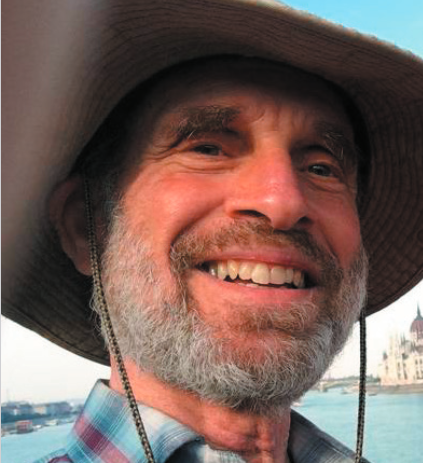

Andy Schmookler’s The Experience of Meaning can be read on his website, ABetterHumanStory.org.

It is pretty clear that your thinking here is correct. Humans are a part, the end part, of the mammalian animal spectrum, so that answers the question of whether material life can produce conscious life. (Mysterious as it may still be!)